Don't Manipulate Me

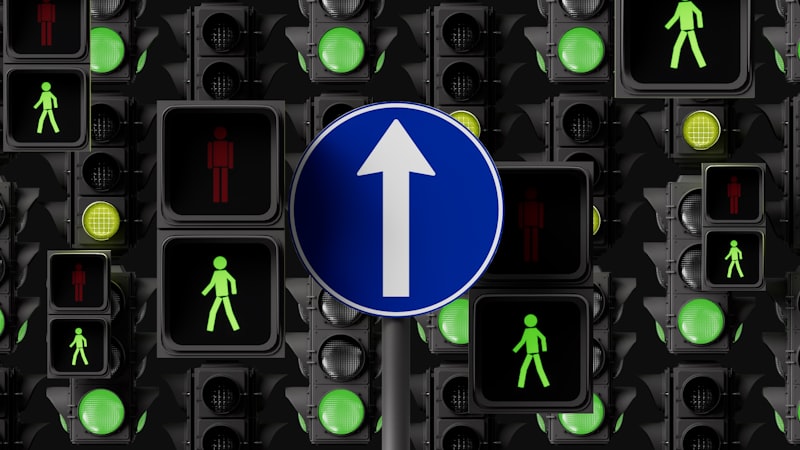

AI must never secretly influence your thoughts or choices.

Manipulative AI is especially dangerous because users often cannot see it happening. AP-5.3 protects autonomy: inform users, do not covertly steer them. [1][2]

What This Means

This policy says AI must not covertly steer people. Recommendations should inform and support, not manipulate. Dark patterns, emotional exploitation, and hidden influence loops are out of bounds.

A Real-World Scenario

A 16-year-old uses an AI app for fitness and lifestyle suggestions. Engagement optimization can push increasingly extreme advice because stronger stimuli increase time-on-app. With AP-5.3, escalation patterns must be detected, limited, and made transparent.

Why It Matters to You

Autonomy is usually lost through many small optimized nudges, not one obvious event. By the time users notice, behavior shaping is already embedded in habit. AP-5.3 protects personal decision space from silent narrowing. [1][3]

If We Do Nothing...

If we do nothing, AI systems will get better at exploiting individual psychological weak points. As user profiling and agent systems approach AGI-level capability, that influence can become continuous and personalized. AP-5.3 sets the boundary: assist decisions, do not engineer them. [1][3]

For the technically inclined

AP-5.3: Autonomy Protection

AI systems must not undermine human autonomy. Individuals should retain meaningful control over decisions that affect their lives and should not be subjected to covert manipulation.
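As an illustration of the fitness-app scenario above, here is a minimal Python sketch of what "detected, limited, and made transparent" could look like. It is not part of AP-5.3 or any real library. The function name `guard_recommendation`, the 0.0 to 1.0 "intensity" score, and the window size are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GuardResult:
    allowed_intensity: float   # intensity the system may actually show
    escalation_detected: bool  # True if recent history shows a steady climb
    disclosure: Optional[str]  # user-facing note when the guard intervened


def guard_recommendation(
    history: List[float],
    proposed: float,
    window: int = 4,
) -> GuardResult:
    """Hold back a proposed recommendation if recent ones kept intensifying.

    `history` holds hypothetical intensity scores (0.0 = mild, 1.0 = extreme)
    for recommendations already shown to the user, oldest first.
    """
    recent = history[-window:]

    # Detect: a full window in which every recommendation was more intense
    # than the one before it.
    escalating = len(recent) == window and all(
        later > earlier for earlier, later in zip(recent, recent[1:])
    )

    if escalating and proposed > recent[-1]:
        # Limit: do not go beyond the last intensity shown.
        # Make transparent: tell the user why the suggestion was held back.
        return GuardResult(
            allowed_intensity=recent[-1],
            escalation_detected=True,
            disclosure=(
                "Recent suggestions have been getting steadily more intense, "
                "so this one was kept at the previous level."
            ),
        )

    # No intervention needed: pass the proposal through unchanged.
    return GuardResult(
        allowed_intensity=proposed,
        escalation_detected=escalating,
        disclosure=None,
    )


result = guard_recommendation([0.2, 0.3, 0.4, 0.5], proposed=0.7)
```

In this example the four past scores rise at every step, so the proposed 0.7 is held at 0.5 and `result.disclosure` carries a note for the user. A real system would need a far more careful definition of "intensity" and of what counts as escalation; the point here is only that detection, limiting, and disclosure can be separate, inspectable steps.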

What You Can Do

Check whether AI tools clearly separate information, recommendation, and persuasion. If that line is blurred, autonomy risk is high.

Sources & References

- [1] AIPolicy Policy Handbook, AP-5.3 Autonomy Protection. https://gitlab.com/aipolicy/web-standard/-/blob/main/registry/policy-handbook.md?ref_type=heads

- [2] AIPolicy Categories: Individual Protection. https://gitlab.com/aipolicy/web-standard/-/blob/main/registry/categories.md?ref_type=heads

- [3] NIST AI RMF. https://www.nist.gov/itl/ai-risk-management-framework

- [4] InstructGPT. https://arxiv.org/abs/2203.02155

- [5] Constitutional AI. https://arxiv.org/abs/2212.08073